We co-organised SafeAI, the AAAI Workshop on Artificial Intelligence Safety.

It was held on January 27, 2019, in Honolulu, Hawaii (USA), as part of the Thirty-Third AAAI Conference on Artificial Intelligence (AAAI-19), one of the world's leading AI conferences. SafeAI aims to explore new ideas on AI safety engineering, ethically aligned design, regulation, and standards for AI-based systems. These regular workshops embed safety in the wider field and provide a publication venue for high-quality AI safety research. 22 papers were presented and can be read in full here.

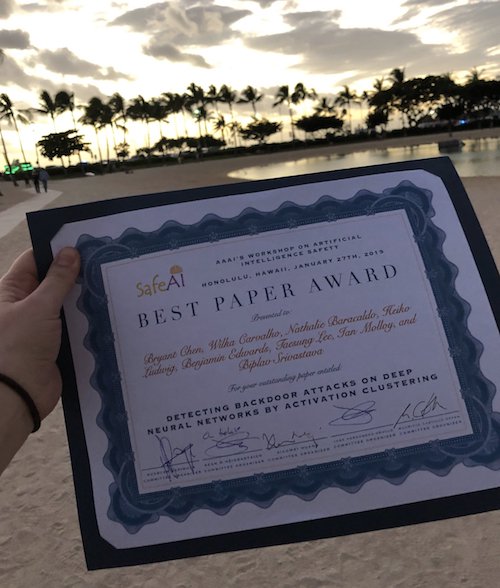

The Best Paper Award was won by a team from IBM Research: Detecting Backdoor Attacks on Deep Neural Networks by Activation Clustering - Bryant Chen, Wilka Carvalho, Nathalie Baracaldo, Heiko Ludwig, Benjamin Edwards, Taesung Lee, Ian Molloy, Biplav Srivastava

The other best paper candidates were:

- Impossibility and Uncertainty Theorems in AI Value Alignment (or why your AGI should not have a utility function) - Peter Eckersley (an Invited Talk)

- Requirements Assurance in Machine Learning - Alec Banks, Rob Ashmore

- Robust Motion Planning and Safety Benchmarking in Human Workspaces - Shih-Yun Lo, Shani Alkoby, Peter Stone

- Surveying Safety-relevant AI Characteristics - Jose Hernandez-Orallo, Fernando Martínez-Plumed, Shahar Avin, Seán Ó hÉigeartaigh

The Workshop also featured Keynotes:

- Dr. Sandeep Neema (DARPA), Assured Autonomy

- Prof. Francesca Rossi (IBM and University of Padova), Ethically Bounded AI

and Invited Talks:

- Dr. Ian Goodfellow (Google Brain), Adversarial Robustness for AI Safety

- Prof. Alessio R. Lomuscio (Imperial College London), Reachability Analysis for Neural Agent-Environment Systems

The full proceedings - all 22 papers - can be found here.

Related resources

- Surveying Safety-relevant AI Characteristics - Peer-reviewed paper by Jose Hernandez-Orallo, Fernando Martínez-Plumed, Shahar Avin, Seán Ó hÉigeartaigh