Email: jlw84@cam.ac.uk

Personal website and blog: www.jesswhittlestone.com

Twitter: @jesswhittles

AI:Futures and Responsibility group website: www.ai-far.org

Jess works on various aspects of AI ethics and policy, with a particular focus on what we can do today to ensure AI is safe and beneficial in the long-term. She has a PhD in Behavioural Science from the University of Warwick, a first class degree in Mathematics and Philosophy from Oxford University, and previously worked for the Behavioural Insights Team advising government departments on their use of data, evidence, and evaluation methods.

Can you tell us about your pathway to CSER?

Like many people at CSER, my background is pretty mixed. I studied maths and philosophy as an undergrad, but when I graduated I realised I wanted to work on something a bit less abstract and a bit more likely to have a positive impact on the world. I still found myself drawn to big-picture questions, though, so I ended up doing a PhD in behavioural & cognitive science, where I looked at what it really means to say people are “rational” and whether current psychology research actually paints as dire a picture of human rationality as is often claimed (spoiler: I don’t think it does). In an attempt to ground myself even more in the ‘real world’ outside of academia, I spent a year during my PhD working part-time for the Behavioural Insights Team, who draw on findings from behavioural science to improve public policy. It was during this time I started getting really interested in what was going on in AI. I ended up working on several projects that involved advising government departments on their use of data science and machine learning, and I was struck by how keen policymakers were to use these tools despite not really understanding what they could(n’t) do. At the same time, we were just beginning to see impressive progress in AI (most notably from DeepMind), and people in policy circles were beginning to think about the wider impacts of this technology and what might be needed to mitigate sources of harm. I was pretty convinced by that point that AI was likely to have enormous impacts on society, and it seemed like it might be an important moment to try and contribute to the discourse that was evolving around what to do about it. So when in early 2018 a postdoc position in AI policy came up at CFI (CSER’s sister organisation), I applied -- and I’ve been working on these issues across both CFI and CSER since then.

Please can you tell us about your main area of expertise?

My research broadly focuses on trying to understand how advances in AI will impact society in the long-term, and what we can be doing today to try and shape those impacts in positive directions. I’ve worked on this from many different angles, including: looking at the role of ethical principles and norms in shaping the impacts of AI; attempting to shape the terminology, questions and priorities that frame this emerging field of AI ethics and governance; and more recently exploring how we can more effectively anticipate and prepare for future impacts of AI. Given my very interdisciplinary background, a lot of what my research involves is collating, filtering, and synthesising information from really diverse sources, and talking to and convening experts with different perspectives to get a clear understanding of complex issues.

What is your current research focus at CSER?

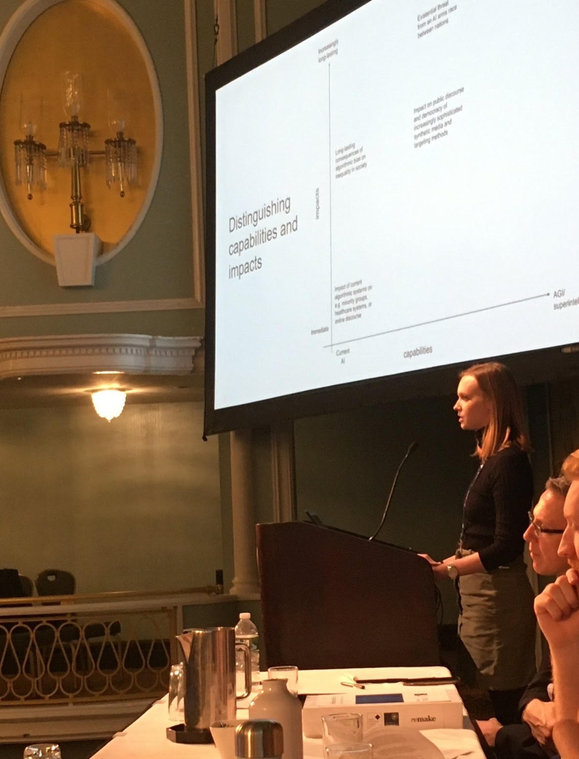

My current research aims to explore the potential long-term impacts and risks of AI in a way that is both grounded in current capabilities and trends, and inclusive of a wide range of perspectives and concerns.

Most concerns about the long-term impacts of AI focus on a scenario where AI attains equal or higher levels of intelligence than humans, and we therefore lose control of our future to AI systems. Though I think these scenarios are worth preparing for, I also think this is quite a narrow way of thinking about how AI might impact our future and contribute to existential risk. There’s increasing recognition that AI systems falling short of human-level intelligence could have extreme impacts on society, but research and policy communities still lack a clear picture of what the most important scenarios and risks are, and therefore what is most important to do today. My research aims to clarify priorities for AI governance today by making this picture clearer.

At the moment, this work has three highly interconnected strands (from most to least developed):

- AI risk without superintelligence: I’m working with Sam Clarke on mapping out the different ways that AI could contribute to extreme risk without necessarily reaching the level of superintelligence, synthesising existing work on different aspects of this, and developing this into a research agenda.

- Mid-term AI: I’m interested in understanding how AI capabilities currently receiving substantial research attention (such as deep reinforcement learning or generative models) might result in real-world products and services over the next 5-10 years. I think there is an important ‘sweet spot’ here where it may be possible to foresee applications and possible impacts with enough advance warning to prepare for them.

- Participatory AI futures: I’m interested in developing and testing methods for involving a much greater diversity of voices in imagining the futures we want (and do not want) from AI. Most ‘future-focused’ work on AI focuses on distilling knowledge from experts to make predictions, but the future is not inevitable - it’s something we are shaping with our (in)actions today. I think that if we want AI to be broadly beneficial, we need to develop much clearer shared visions of desirable and undesirable AI futures, and this requires participation from across all of society.

I’m also interested in identifying and advocating for more specific AI policy interventions/decisions that seem broadly beneficial today given our current understanding of future AI risks and impacts. For example, I think a big challenge for AI governance at present is that there’s an information asymmetry between those developing AI and those attempting to govern it, and that improving governments’ capacity to monitor progress in AI development and applications may therefore be really important.

What drew you to your research initially and what parts do you find particularly interesting?

I’ve always been interested in decision-making and how we can make it better. During my PhD, I thought a lot about this on the individual level: how do we think about the biases and irrationalities in human decision-making, and what it might take to improve them? But since then, I’ve become more interested in how we make good decisions on a societal or institutional level since these seem even more important. My initial motivation to work on the societal impacts of AI therefore came from this perspective: AI is likely to have an enormous impact on our future, and so making the right decisions about how we develop and govern AI is hugely important. The other angle that drew me in initially was the possibility that developments in AI might be able to support us in making better decisions as humans, by complementing our strengths and limitations.

Over the past few years, I’ve become increasingly interested in the problem of how we can think clearly about the future (of AI), and how this can inform better decision-making today. I think this is hugely important for AI governance: AI is developing quickly, and we can’t just react to problems as they arise or the harms will get too big. This is why I’m so interested in developing richer visions of the future of AI: because I believe that thinking through different possible trajectories in depth can help us to do things like identify particularly important decision points, and make decisions today in ways that are robust to different future possibilities. We’re implicitly making assumptions about the future all the time when we make decisions, so making those assumptions explicit, and thinking about how they affect the impacts of our decisions, is really important.

What are your motivations for working in Existential Risk?

Ultimately, because I want to use my time and privileged position in society to try and make the world better for the future. I think there are lots of different ways to make the world better, and lots of problems in the world that need solving. But as someone who is naturally drawn to big-picture, philosophical thinking, working on existential risk provides a good balance -- it satisfies that part of me that wants to think about big, abstract, intellectually stimulating topics, while also allowing me to work in an area where there are very concrete things that can be done today, and keeping me grounded through engagement with communities beyond academia.

What do you think are the key challenges that humanity is currently facing?

There are a lot of important challenges humanity is facing today: tackling climate change, managing biological risks, and navigating the transition to a world with advanced AI are some I think are particularly important. But there’s also an overarching challenge: we need to improve our collective competence and institutional capacity to a point where we can identify, prepare for and respond to new challenges much more effectively than we do today. Otherwise, even if we manage to navigate the specific challenges, some other threat may well come along and catch us unawares at some point in the future.

I’m particularly concerned that there’s a mismatch between society’s current ability to make technological progress, and our ability to adapt our institutions and collective decision-making capacities to effectively deal with the new challenges those technologies bring. I’m no Luddite - I ultimately think technology could be enormously beneficial, but I do worry that developing powerful technologies in the world we live in today is almost inevitably going to go badly. So I think it’s really important we work to get the world to a place where powerful technologies can be used safely and beneficially: working to reduce inequality, develop ways to hold powerful actors accountable, give more people a voice in society, promote international cooperation, and so on. This is an enormous challenge, but I’m excited about a lot of the work CSER is doing and particularly how many of my colleagues are balancing this need to tackle specific threats with the need to address more underlying causes.

For people who are just getting to grips with Existential Risk, do you have any recommendations for reading, people to follow or events to attend?

Others have already given lots of great recommendations for what to read and listen to around existential risk in general (e.g. see Matthijs and Tom’s recent recommendations). If you’re interested in getting to grips with the impacts and risks of AI specifically, a few recommendations:

- Kai-Fu Lee’s “AI Superpowers” is a really interesting, well-grounded discussion of the potential long-term impacts of AI on power and inequality

- Remco Zwetsloot and Allan Dafoe’s “Thinking About Risks From AI: Accidents, Misuse and Structure” provides a really helpful starting point for thinking about some of the more complex and diffuse impacts AI could have on society

- The AI Index and State of AI Report both come out once a year and give a really good, detailed survey of what’s been going on with AI research, applications, and various other AI-related things over the past year - I think understanding this stuff is really important for thinking clearly about AI impacts and governance.